Moving Beyond CAP: A Developer’s Guide to the PACELC Theorem

Explore the powerful PACELC theorem and learn exactly why the foundational CAP theorem is insufficient for modern software design. Understand the crucial engineering trade-offs between system latency and data consistency to build vastly better distributed web architectures.

Let me tell you a story that almost every growing software developer experiences at some point in their career. You have an amazing idea for a new web application. You start building it locally on your laptop. You create a single web API and a single, beautifully simple relational database. When you test it on your machine, everything is incredibly fast, incredibly reliable, and perfectly in sync.

Then, your application goes live and starts to succeed. Users are logging in from New York, London, and Tokyo. Suddenly, your single database starts to sweat under the load. The users in Tokyo are complaining that saving their profile takes a painfully long time because their data has to travel across the globe to reach your solitary server.

You do what any logical application architect would do in this situation. You decide to add more database servers in different geographic regions to improve performance. This is the exact moment you enter the wild and confusing world of distributed systems. It is also the exact moment you realize that managing identical data across multiple servers is much more complicated than it initially looks.

When you start researching how to handle multiple databases, you will undoubtedly stumble upon the CAP theorem. You might spend hours reading articles about it, drawing diagrams on whiteboards, and finally feeling like you understand the core trade-offs. You feel ready to conquer the world of distributed architecture. But there is a massive secret that many senior developers only learn the hard way. The CAP theorem is missing a huge piece of the puzzle. It only talks about what happens when your system breaks down.

What about when everything is working perfectly?

This is exactly where the PACELC theorem comes in to save the day. It is a vital concept that completely changes how developers design and evaluate distributed architectures. Let us dive deep into why the CAP theorem falls short, what the PACELC theorem actually means, and how this knowledge will make you a significantly better system designer.

A Quick Refresher on the CAP Theorem

Before we can appreciate PACELC, we first need to briefly summarize the foundational CAP theorem. Proposed by computer scientist Eric Brewer in the year 2000, it states that any distributed data store can only provide two of the following three guarantees simultaneously.

First, we have Consistency. In a purely consistent system, every single read operation gives you the most recent write. If one server updates a user record, any subsequent read from any other server will reflect that exact update. Every single node in the network always sees the exact same truth at the exact same time.

Second, we have Availability. This means that every non-failing node in your system will return a valid response. A user request will never just hang indefinitely or return a failure error simply because the system is busy synchronizing data in the background. If a server is online and running, it will answer the user prompt.

Third, we have Partition Tolerance. A partition happens when a network failure prevents your regional servers from communicating with each other. For example, a fiber optic cable is damaged, and your London server can no longer talk to your New York server. Partition tolerance means your system continues to function overall despite these major network communication breakdowns.

The harsh reality of building distributed applications over the public internet is that network partitions will inevitably happen. Dedicated wires get cut by construction crews, vital network routers fail unexpectedly, and entire data centers occasionally lose outside connectivity. Because you cannot simply opt out of partition tolerance, you must always rely on it.

Because network partitions are a strict fact of life, the CAP theorem forces you to make a deeply painful choice whenever a network failure occurs. You must pick either Consistency or Availability.

If you choose Consistency during a network break, your system must proactively stop accepting requests on isolated nodes to prevent data mismatches. You actively sacrifice platform availability to guarantee logical data accuracy.

If you choose Availability during a network break, your system keeps accepting reading and writing requests everywhere. The catch is that the data might be temporarily inaccurate or out of sync between servers until the network connection is restored. You sacrifice strict consistency to ensure users can keep using the application without seeing error screens.

This is the famous CAP theorem trade-off. It is simple, logical, and universally taught in computer science programs.

The Glaring Problem with the CAP Theorem

Based solely on the concepts of the CAP theorem, your database architecture perfectly handles disaster scenarios. But let us be brutally honest for a second. How often do catastrophic network partitions actually happen in the real world? In modern cloud environments managed by tech giants, network links within regions are incredibly stable. Your system might run without a single major partition event for months or even years at a time.

The CAP theorem is entirely silent on what happens during normal, everyday operations. It provides absolutely zero guidance on how your system should behave when the network cables are perfectly healthy.

Think back to our earlier scenario with the servers scattered across New York and London. Imagine there are no network breaks. Everything is communicating perfectly fine. A user in New York updates their profile picture at the exact same time a relative in London opens their profile page.

The New York server wants to be fully mathematically consistent. So, it instantly sends the new picture data over the Atlantic Ocean to the London server. It waits for London to process the data and send back a digital confirmation that it successfully received the update. Only after all that travel time does the New York server tell the original user that the save was successful.

This waiting period is called latency. The communication across the physical ocean takes actual time, which is strictly limited by the literal speed of light through fiber optic cables. Because the digital system prioritized perfect data consistency across the globe, the New York user experienced a noticeable, frustrating delay when they clicked the save button.

This introduces a completely new trade-off that has nothing at all to do with network failures. You are now actively trading user performance for data accuracy. The CAP theorem does not cover this at all. That is an enormous blind spot for developers trying to build fast, highly responsive web applications.

Enter the PACELC Theorem

In 2010, a computer science professor named Daniel Abadi formally proposed an extension to the CAP theorem to fix this massive architectural blind spot. He called his new framework the PACELC theorem. While the acronym is admittedly a bit of a mouthful to pronounce, the underlying logic is absolutely brilliant for software engineers.

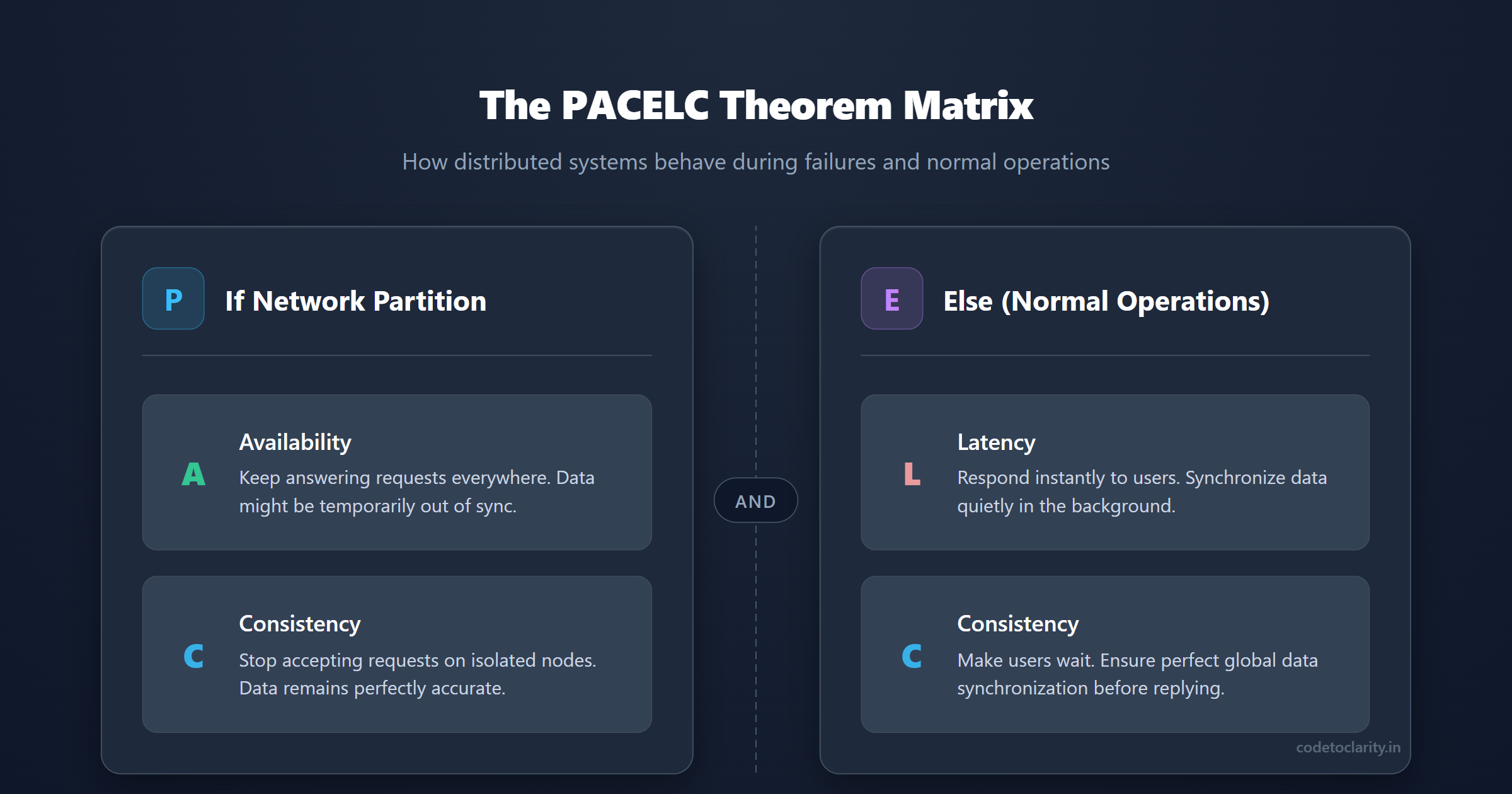

PACELC creates a complete architectural framework by splitting distributed system behavior into two distinct operational scenarios. It defines what happens when the network is broken and what happens when the network is completely fine.

Let us break down the acronym piece by piece.

The first part of the word is "PAC". This stands for: If there is a Partition (P), you must choose between Availability (A) and Consistency (C). This entire first half is literally just the original CAP theorem restated. It accurately acknowledges that when things break, you absolutely still have to make that original tough choice.

The second part of the word is where the true magic happens. The "ELC" stands for: Else (E), you must choose between Latency (L) and Consistency (C).

This brilliant "Else" clause covers the 99.9% of the time when your application is running beautifully and normally. It forces software developers to ask a highly critical business question. When the network is healthy, are we willing to make our users wait longer to ensure perfect global data synchronization (Consistency), or do we want to respond instantly and synchronize everything quietly in the background (Latency)?

By combining both of these different scenarios, PACELC gives system designers a realistic roadmap for evaluating modern databases. You no longer just plan for data center disasters. You also actively plan for everyday application performance.

Real-World Scenarios and Trade-offs

To truly grasp how PACELC directly influences application architecture, we need to look at real-world software products. Different commercial products have completely different business requirements, and PACELC helps us logically categorize those technical needs. Let us explore three diverse scenarios that modern developers regularly encounter.

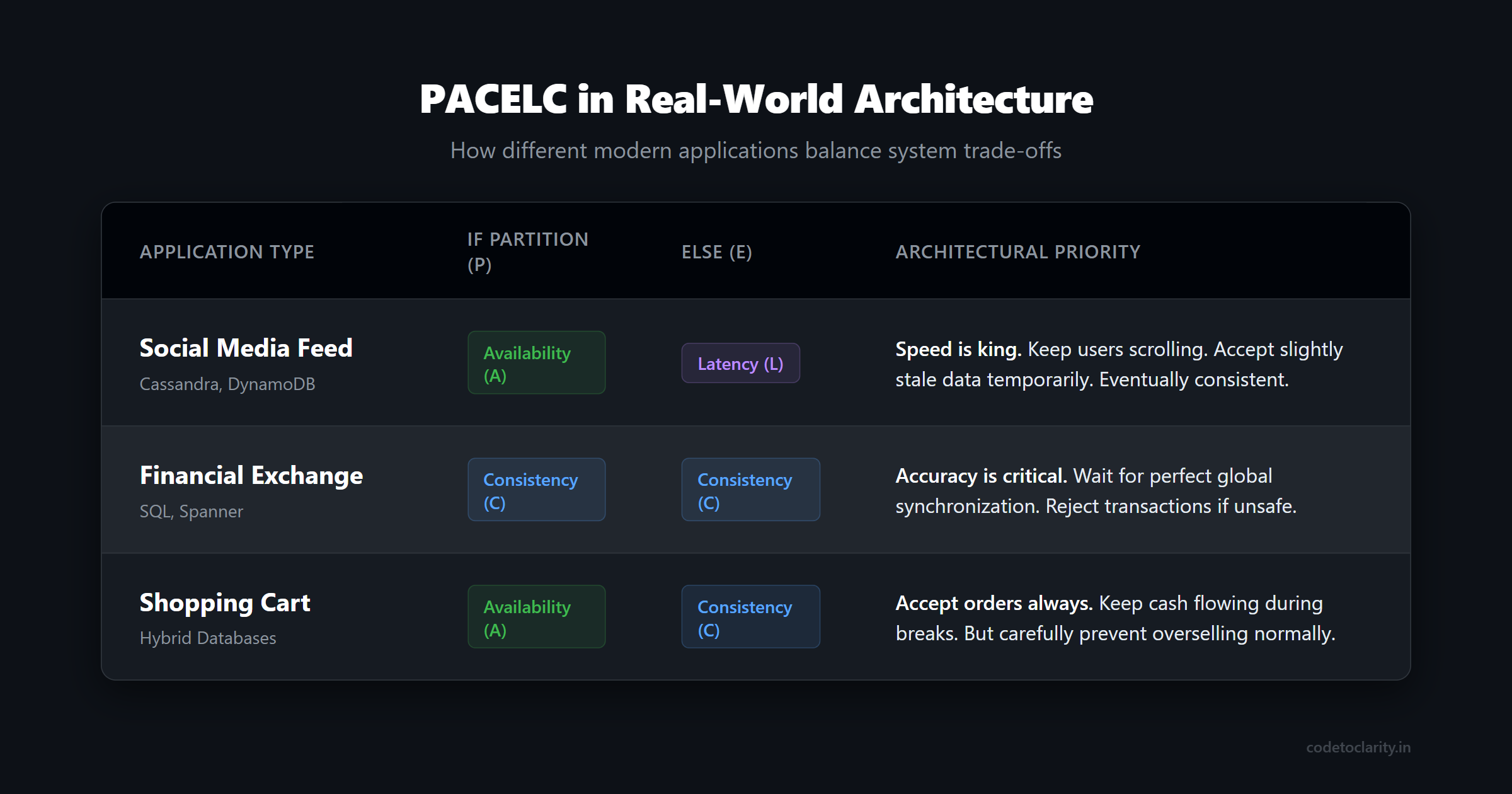

The Global Social Media Feed

Imagine you are the lead backend developer building a planetary-scale social network. Users are endlessly scrolling, casually liking random posts, and rapidly adding short comments. What is the single most important factor for this specific application? It is user speed and uninterrupted uptime.

If a regional server goes offline or the core network briefly splits, the mobile app must stay functional to keep users engaged. You desperately want users to keep endlessly scrolling, even if they are seeing a slightly older cached version of their timeline. Therefore, during a partition (P), you absolutely prioritize Availability (A).

Now consider normal everyday operations (E). When a user taps the small heart icon button to like a funny video, they naturally expect that heart icon to turn red instantly. If the backend system made them wait a full agonizing second while it strictly synchronized that newly added "like" across thousands of servers globally, they would assume the app was broken and abandon it.

Therefore, you heavily prioritize low Latency (L) over strict mathematical Consistency. It is perfectly fine if a user sitting in another country sees the video with one less like for a few fleeting seconds. The platform data will eventually become consistent in the background.

In the formal PACELC framework, this giant social network is categorized as a PA/EL system. It chooses Availability during network partitions and Latency during normal operations. Databases like Amazon DynamoDB or the widely utilized Apache Cassandra repository are utterly perfect for this exact architectural choice. They are built from the ground up to be highly available and incredibly fast, utilizing what is known in the industry as "eventual consistency".

The High-Stakes Financial Exchange

Now let us mentally pivot completely. You are the senior database developer for a global corporate banking platform or a frantic stock exchange. The stakes are understandably incredibly high. Every single cent must be accounted for perfectly, every single millisecond.

During a sudden network partition, you simply cannot risk a user withdrawing funds from an ATM located in London while their true account balance is currently locked inside a disconnected offline server in Frankfurt. Doing so could result in massive financial losses for the bank. It is much safer to simply display an error message and deny the active transaction. During a partition (P), you rigorously choose strict Consistency (C). You gracefully sacrifice system availability to legally protect financial integrity.

What happens during normal healthy operations (E)? You still cannot take any technical risks. When a user transfers ten thousand dollars, every single relevant database node in the cluster must securely agree that the transaction definitively occurred before offering the user a green success message. This strict multiphase synchronization means the user will experience slightly higher Latency (L) while the servers talk to each other.

In the banking industry, a stressed user will gladly wait three full seconds for a guaranteed secure transfer rather than get a scary instant response populated with legally questionable data. Thus, you actively prioritize aggregate Consistency (C) over Latency.

Under the PACELC rules, this banking application is a strict PC/EC system. It insists on total consistency no matter what the network is doing. Traditional SQL relational databases heavily configured with tight synchronous replication often fall neatly into this rigorous category. Another fascinating modern example is Google Spanner, a specialized cloud database explicitly designed to mathematically provide strong consistency even across global geographic distances.

The E-Commerce Shopping Cart

Sometimes, the choice is not entirely black and white. Major e-commerce retail platforms often creatively use hybrid database approaches to maximize profit while managing physical inventory properly.

Consider an active online shopping cart system during a holiday sale. You absolutely want the browsing system to remain fully available during a network partition. If users physically cannot add promotional items to their checkout cart, your company is heavily bleeding revenue every single second. You intelligently prioritize Availability (A) during network failures to keep the cash flowing.

However, during normal healthy operations, you need to manage the limited physical warehouse inventory quite accurately. If two aggressive shoppers try to rapidly buy the absolute final discounted laptop in stock, the inventory system needs a high degree of rigid consistency to actively prevent embarrassing overselling. So, you might lean much closer towards strict Consistency (C) when the network is healthy, carefully employing complex conflict resolution logic only when strictly necessary.

This unique combination successfully creates a PA/EC system. The overall architecture remains incredibly robust during unforeseen failures but constantly attempts to keep critical transaction data tight and meticulously organized during everyday normal use.

Applying PACELC to Modern .NET Architecture

Understanding PACELC is not just academic university trivia meant for boring textbooks. It directly impacts the software tools and managed cloud services you choose to implement when building your next big project in a robust C# ecosystem.

When you thoughtfully spin up a modern cloud database, you often have direct technical control over these exact PACELC trade-offs. A shining example of this developer flexibility is found within the Microsoft Azure platform. It offers incredible technical flexibility through its customizable Azure Cosmos DB consistency levels. Instead of forcing your application into a single rigid consistency model, Cosmos DB let you manually turn a digital dial.

You can specifically choose "Strong Consistency" tailored for your critical financial billing modules (EC), and then dial it way down to loose "Eventual Consistency" for your massive user activity tracking logs to maximize data ingestion speed (EL). You get the absolute best of both architectural worlds housed within the exact same database platform.

Let us dive into a concrete technical example within a standard professional ASP.NET Core environment. Imagine building a checkout service where user speed is your unquestionable priority.

public class CodeToClarityCheckoutService

{

private readonly IOrderRepository _orderRepo;

private readonly IRedisCache _codetoclarityCache;

public CodeToClarityCheckoutService(IOrderRepository orderRepo, IRedisCache cache)

{

_orderRepo = orderRepo;

_codetoclarityCache = cache;

}

public async Task<OrderResponse> ProcessOrderAsync(OrderRequest request)

{

// 1. We write the new user order to the primary regional database.

var newOrder = await _orderRepo.SaveOrderAsync(request);

// 2. We deliberately choose Latency (speed) over Consistency (C) for normal operation.

// We trigger an event to update global analytical servers, but importantly we do NOT await the response.

_ = SyncToGlobalAnalyticalServersAsync(newOrder);

// 3. We immediately update the local fast cache to ensure the user sees their order history instantly.

await _codetoclarityCache.SetAsync($"order_{newOrder.Id}", newOrder);

// 4. Return success instantly without waiting for the global geographic sync.

return new OrderResponse { Success = true, OrderId = newOrder.Id };

}

private async Task SyncToGlobalAnalyticalServersAsync(OrderData order)

{

// This simulates a slow transatlantic network pipeline method that we deliberately did not await.

await Task.Delay(3000);

}

}

In this practical code snippet, we are fully embracing the "EL" portion of the theorem. We are actively writing to a localized database and immediately returning a fast response to the web user. We fire off a background task to synchronize the heavy analytical data globally, completely accepting that our global tracking databases will be slightly logically inconsistent for a few seconds.

Application caching is another massive area where PACELC shines brightly. When you decide to implement a distributed cache system to radically speed up your application queries, you are actively choosing to prioritize latency over perfect database consistency. If you strategically harness a tool like the popular open-source StackExchange.Redis package accessed from NuGet, you are storing redundant data temporarily in incredibly fast hardware memory. The temporary cached data might briefly differ from your main permanent SQL database, but the read speed improvement is so dramatically noticeable that the trade-off is absolutely worth it for the end user.

As a professional software developer, your daily job is completely dependent on thoroughly understanding your specific unique application requirements. There is absolutely no universally perfect software database. There are only right and wrong engineering choices tailored strictly for your unique corporate context.

Conclusion

The classic CAP theorem acts as a truly fantastic starting point for learning about the intricate complexities of modern distributed systems. It logically teaches you that in the inherently chaotic and unpredictable world of internet server networking, you mathematically cannot have it all when things physically break. You are forced by physics to make hard compromises.

However, treating the generic CAP theorem as the ultimate architectural rulebook will leave your infrastructure painfully unprepared for the grueling reality of everyday performance tuning. The expanded PACELC theorem fills in that critical missing systemic half. It actively forces you to look deeply at your software application and ask the hard architectural questions. Are we technically optimizing for the absolute fastest possible user response times, or are we legally optimizing for perfect mathematical data accuracy?

By actively keeping the full PACELC framework in mind during your early planning phases, you will undoubtedly design significantly more resilient, highly performant, and deeply reliable web applications. You will transition from simply preparing to handle rare network disasters to truly architecting for immense daily success.

Kishan Kumar

Software Engineer / Tech Blogger

A passionate software engineer with experience in building scalable web applications and sharing knowledge through technical writing. Dedicated to continuous learning and community contribution.